Why Microsoft Stock Looks Cheap Given Its AI Ambitions, and Why That's the Wrong Question

Barron's made waves recently by arguing that Microsoft stock is cheap given its AI pipeline, pointing to a valuation that appears compressed relative to the company's long-term artificial intelligence revenue potential. The framing is compelling for equity investors, but for enterprise technology leaders it invites entirely the wrong line of inquiry. Cheap relative to what benchmark? Cheap compared to Alphabet's forward P/E? Cheap against a discounted cash flow model that assumes Copilot reaches 50% enterprise penetration by 2027? The answer shapes very different decisions depending on whether you're managing a portfolio or managing a technology stack.

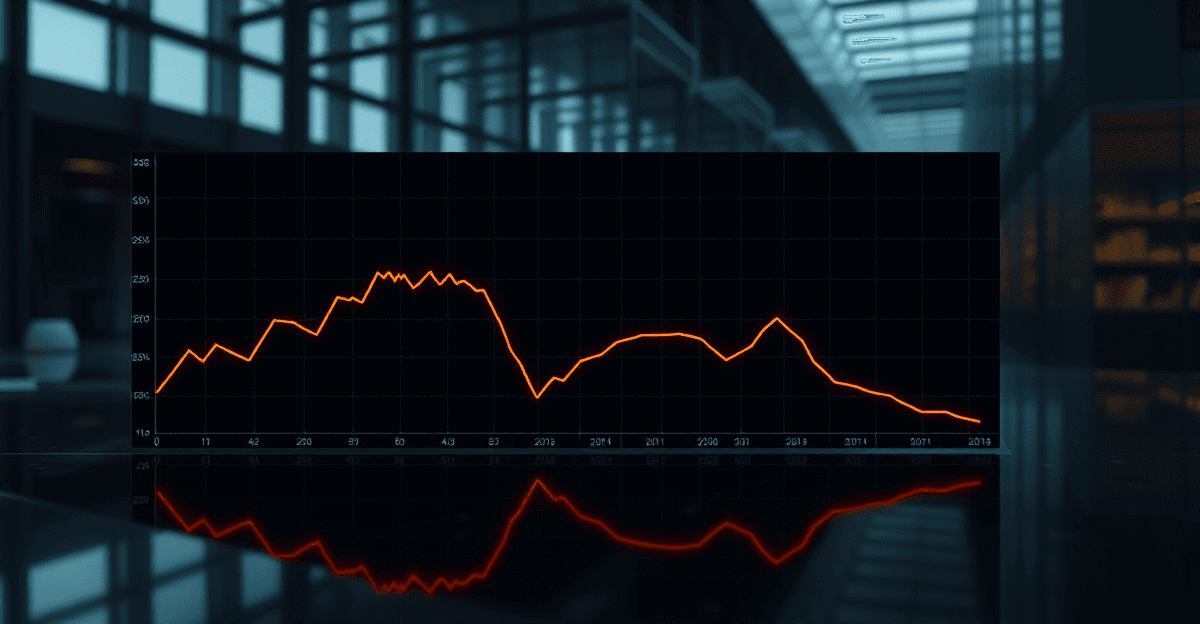

The broader context matters here. Microsoft's stock price pullback isn't an isolated event — it reflects sector-wide anxiety about artificial intelligence monetization timelines. Mega-cap stocks across the AI landscape, from Alphabet to Amazon, have faced similar compression as institutional investors grow impatient with the gap between AI capital expenditure and recognized revenue. Microsoft has committed over $80 billion in AI infrastructure spending for fiscal year 2025 alone, yet enterprise activation rates for its flagship Copilot product remain stubbornly low in many organizations. That tension is what the market is pricing.

For technology decision-makers, the critical discipline is separating stock price sentiment from platform maturity signals. A declining share price doesn't mean Azure OpenAI is technically inferior — it means investors are recalibrating their timeline assumptions. Conversely, a rising stock price doesn't validate your organization's AI deployment strategy. These are distinct signals, and conflating them leads to procurement decisions driven by financial media narratives rather than operational reality.

The Mega-Cap AI Battle: Microsoft, Alphabet, and Amazon Web Services

The competitive landscape among the three dominant enterprise AI hyperscalers is more nuanced than headline comparisons suggest. Microsoft's Azure OpenAI Service benefits from deep integration with the Microsoft 365 ecosystem, making it the default gravity well for enterprises already running Teams, SharePoint, and Dynamics. Alphabet's Gemini stack, meanwhile, has made aggressive inroads in organizations with heavy Google Workspace adoption and offers compelling multimodal capabilities that are increasingly relevant for industries like healthcare imaging and legal document analysis. Amazon Web Services' Bedrock platform takes a deliberately model-agnostic approach, allowing enterprises to deploy models from Anthropic, Meta, Mistral, and others under a single API layer — a significant architectural advantage for organizations wary of model lock-in.
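To make the model-agnostic idea concrete, here is a minimal sketch of a routing layer that hides provider differences behind one call. It is an illustration only: `ModelRouter`, `Completion`, and the two stub adapters are hypothetical names, and the stubs return canned strings rather than calling any real vendor SDK.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Completion:
    provider: str
    text: str


# An adapter maps a prompt to response text. In a real system each adapter
# would wrap a vendor SDK call; these are stand-ins (hypothetical).
Adapter = Callable[[str], str]


class ModelRouter:
    """Sends a prompt to whichever registered provider the caller names."""

    def __init__(self) -> None:
        self._adapters: Dict[str, Adapter] = {}

    def register(self, name: str, adapter: Adapter) -> None:
        self._adapters[name] = adapter

    def complete(self, provider: str, prompt: str) -> Completion:
        if provider not in self._adapters:
            raise KeyError(f"no adapter registered for {provider!r}")
        return Completion(provider, self._adapters[provider](prompt))


def fake_azure_openai(prompt: str) -> str:
    # Stub only: no network call is made.
    return f"[azure-openai stub] {prompt}"


def fake_bedrock(prompt: str) -> str:
    # Stub only: no network call is made.
    return f"[bedrock stub] {prompt}"


router = ModelRouter()
router.register("azure-openai", fake_azure_openai)
router.register("bedrock", fake_bedrock)
```

Because application code depends only on `router.complete(...)`, swapping or adding a provider means registering another adapter rather than rewriting callers, which is the lock-in hedge the paragraph above describes.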

The Barron's Roundtable has signaled Microsoft is primed for the AI battle, and from a pure ecosystem integration standpoint, that assessment has merit. But enterprise procurement teams need a vendor-agnostic view that financial analysts rarely provide. Microsoft wins on workflow integration and identity management. Alphabet wins on data analytics depth and competitive pricing for inference at scale. AWS wins on infrastructure flexibility and the breadth of its model marketplace. No single vendor dominates every use case, and the enterprises that recognize this are negotiating better contracts and building more resilient architectures than those that simply follow the financial media's preferred narrative.

Broader concerns about artificial intelligence disrupting existing software contracts are also reshaping enterprise AI budgets in ways that create both hesitation and opportunity. Organizations that signed multi-year Microsoft Enterprise Agreements before the Copilot era are now facing awkward conversations about seat licensing, usage rights, and whether their existing contracts actually cover the AI features their business units are already using. This ambiguity is creating a window for competitive displacement — and for organizations willing to do the hard work of auditing their current licensing position, meaningful cost savings are available.

What Artificial Intelligence Disrupting Legacy Software Means for Your Stack

Microsoft's Copilot suite represents one of the most interesting examples of a technology company deliberately cannibalizing its own product lines in the name of AI transformation. Copilot for Power Automate is reducing the need for manual flow configuration. Copilot in Excel is compressing the demand for specialized financial modeling consultants. Copilot in Teams is beginning to erode the market for standalone meeting intelligence tools like Otter.ai and Fireflies. Understanding which workflows in your organization are being cannibalized versus genuinely enhanced is not a theoretical exercise — it has direct implications for your software renewal decisions over the next 18 months.

The no-code and low-code segment sits at the epicenter of this disruption. Enterprises that invested heavily in building operational workflows on platforms like Power Apps, Bubble, or Webflow over the past three to five years now face a difficult question: are those implementations still the right foundation, or have AI-native alternatives already leapfrogged them in capability? The honest answer is that many no-code implementations were built to solve problems that AI agents can now address more elegantly — but migrating away from a working, if suboptimal, system requires expertise that most internal IT teams don't have bandwidth for.

This is precisely the gap that RevolutionAI's no-code rescue practice was designed to address. Stalled implementations — the Power Platform deployments that never reached production, the Bubble apps that outgrew their architecture, the automation workflows that worked in the demo but broke under real data volumes — are accumulating across the enterprise landscape at an accelerating rate. Rescuing these investments before the next wave of AI-native tooling makes them permanently obsolete isn't just a cost-saving measure; it's a strategic necessity for organizations that want to enter the AI-native era with clean architectural foundations rather than compounding technical debt.

AI Security and the Hidden Risks in Microsoft's Valuation Outlook

When analysts discuss risks and valuation outlook for Microsoft, they typically focus on revenue concentration, competition from Alphabet and AWS, and macroeconomic sensitivity in enterprise software spending. What they rarely quantify — and what enterprise CISOs are increasingly losing sleep over — is the security liability embedded in rapid AI deployment at scale. Every new AI surface area is a new attack surface, and Microsoft's expanding AI footprint is creating vulnerabilities that traditional security frameworks weren't designed to address.

Microsoft's AI ecosystem — spanning Copilot across Microsoft 365, Azure AI services, and the Power Platform — introduces a category of risks that are qualitatively different from conventional software vulnerabilities. Prompt injection attacks, where malicious content in documents or emails manipulates Copilot's behavior to exfiltrate data or execute unauthorized actions, are already being documented in enterprise environments. Data leakage through overly permissive Copilot configurations — where the AI surfaces documents that employees technically have access to but were never intended to see — is a governance problem that most organizations haven't fully inventoried. Model governance, including questions about which AI models are processing which categories of sensitive data, remains a blind spot in most enterprise AI programs.
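The oversharing problem can be inventoried with a very simple check before any AI assistant is switched on. The sketch below assumes you can export a list of documents with a sensitivity label and the groups allowed to read each one. The `Document` shape, the label names, and the group names are all hypothetical and would need to match your own tenant's conventions.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Document:
    path: str
    sensitivity: str                 # hypothetical labels, e.g. "internal"
    allowed_groups: FrozenSet[str]   # groups with read access


# Assumed conventions; adjust to your own labels and directory groups.
BROAD_GROUPS = frozenset({"Everyone", "All Employees"})
SENSITIVE_LABELS = frozenset({"confidential", "restricted"})


def find_overshared(docs: List[Document]) -> List[Document]:
    """Return sensitive documents readable by at least one broad group."""
    return [
        d for d in docs
        if d.sensitivity in SENSITIVE_LABELS and d.allowed_groups & BROAD_GROUPS
    ]


inventory = [
    Document("/hr/salaries.xlsx", "confidential", frozenset({"All Employees"})),
    Document("/hr/handbook.pdf", "internal", frozenset({"All Employees"})),
    Document("/legal/merger.docx", "restricted", frozenset({"Legal"})),
]

flagged = find_overshared(inventory)
```

In this toy inventory only the salaries file is flagged: it is both sensitive and broadly readable, which is exactly the kind of document an assistant could surface to employees who were never meant to see it.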

A structured AI security audit, as opposed to the compliance checkbox exercise that passes for security review in many organizations, is the step most enterprises miss when evaluating the total cost of AI ownership. RevolutionAI's AI security solutions practice approaches this from an adversarial mindset rather than a compliance mindset, identifying the specific attack vectors relevant to your Microsoft AI deployment and building controls that address real threat models rather than regulatory checklists. In an environment where a single prompt injection incident can result in regulatory exposure, reputational damage, and breach notification obligations, this is not an optional investment.

From POC to Production: Why Most Enterprise AI Investments Stall

The broader concerns about artificial intelligence ROI that are suppressing Microsoft's stock price and creating skepticism in enterprise boardrooms don't primarily stem from model quality limitations. The models are, by any reasonable measure, remarkable. The stall point is almost always the transition from proof of concept to production: the gap between a compelling demo in a controlled environment and a reliable, governed, measurable business process running at scale. Industry analysts estimate that between 60% and 80% of enterprise AI proofs of concept never reach production deployment. That statistic points to an implementation problem, not a model problem.

Microsoft's stock price pullback reflects investor skepticism about enterprise AI monetization timelines, and that skepticism mirrors friction that is very real on the ground. The friction has several consistent sources: data quality issues that weren't apparent in the POC phase, integration complexity with legacy systems that the demo never touched, change management resistance from the business units the AI was supposed to serve, and governance requirements that emerged from legal and compliance teams after the technical work was already done. Each of these is solvable — but solving them requires a different skill set than building the initial proof of concept.

RevolutionAI's POC development and managed AI services practice is specifically designed to collapse the gap between demo success and measurable business value. This means building proofs of concept that are explicitly designed for production promotion from day one — with data pipelines that reflect real-world data quality, integration patterns that account for legacy system constraints, and governance frameworks that satisfy legal and compliance requirements before they become blockers. For organizations that already have stalled POCs, our managed services practice provides the ongoing operational support needed to get those investments across the production threshold.

HPC Infrastructure: The Underreported Driver Behind Microsoft's AI Moat

One dimension of Microsoft's competitive position that stock price analysis from Barron's and Yahoo Finance consistently underweights is the structural advantage created by its capital expenditure on high-performance computing hardware and custom silicon. Microsoft's Maia AI accelerator and Cobalt CPU represent a multi-year bet on reducing inference costs and improving performance for specific workload categories. If that bet succeeds, it will widen Microsoft's cost advantage over enterprises running AI workloads on commodity GPU infrastructure. This is the kind of moat that doesn't show up in quarterly earnings but shapes competitive dynamics over a five-to-ten-year horizon.

Enterprises building private AI infrastructure face the same fundamental HPC design decisions at a smaller scale, and the consequences of getting those decisions wrong are significant. GPU cluster architecture choices made today will determine inference costs and throughput capacity for the next three to five years. Interconnect strategy — the networking fabric that allows GPUs to communicate during training and inference — is frequently underspecified in enterprise AI infrastructure projects, resulting in bottlenecks that are expensive to remediate after deployment. Cooling costs, which can represent 30-40% of total data center operating expense for GPU-dense configurations, are rarely modeled accurately in initial business cases.

RevolutionAI's HPC hardware design consulting helps mid-market organizations navigate these decisions with the same rigor that hyperscalers apply to their own infrastructure investments. The goal is not to replicate Microsoft's infrastructure, which is neither feasible nor necessary for most organizations. The goal is to build AI infrastructure that scales predictably, avoids the most common architectural mistakes, and doesn't create dependencies on a single hyperscaler's roadmap that become expensive to unwind. For organizations evaluating whether to build, buy, or take a hybrid approach to their AI infrastructure, this expertise is the difference between a strategic asset and a capital sink.

Actionable AI Strategy: What to Do While Microsoft Stock Finds Its Floor

The market uncertainty window created by Microsoft's stock price volatility is, counterintuitively, one of the best moments to conduct a rigorous audit of your current Microsoft AI licensing spend. Many enterprises are paying for Copilot seats at $30 per user per month with activation rates below 20% — meaning they're spending significant budget on licenses that are generating no measurable value. This is not a technology failure; it's a change management and deployment failure. Identifying which Copilot deployments are underperforming and understanding why is the first step toward either fixing them or reallocating that budget to higher-ROI AI investments.
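The arithmetic behind that audit is simple enough to script. The sketch below takes the $30 per user per month price and the 20% activation rate from the paragraph above as inputs. The function name, the 1,000-seat example, and the simplification that every non-activated seat counts as idle spend are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SpendSummary:
    monthly_total: float
    monthly_idle: float
    annual_idle: float
    cost_per_active_user: float


def copilot_spend_summary(
    seats: int,
    activation_rate: float,
    price_per_seat_month: float = 30.0,
) -> SpendSummary:
    """Split license spend into active and idle portions.

    Assumes (simplistically) that a non-activated seat delivers no value.
    """
    if seats < 0 or not 0.0 <= activation_rate <= 1.0:
        raise ValueError("seats must be >= 0 and activation_rate in [0, 1]")

    monthly_total = seats * price_per_seat_month
    active_seats = seats * activation_rate
    monthly_idle = monthly_total - active_seats * price_per_seat_month
    # Effective price paid for each seat that is actually being used.
    cost_per_active = (
        monthly_total / active_seats if active_seats > 0 else float("inf")
    )
    return SpendSummary(monthly_total, monthly_idle, monthly_idle * 12, cost_per_active)


# Hypothetical example: 1,000 seats at the 20% activation rate cited above.
summary = copilot_spend_summary(seats=1000, activation_rate=0.20)
```

Under these assumptions, a 1,000-seat deployment spends roughly $24,000 a month on idle licenses, and the effective cost per active user is about $150 rather than the $30 list price. That effective figure is the one to compare against alternative AI investments.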

Multi-cloud AI strategy deserves serious evaluation now, before your next Microsoft Enterprise Agreement renewal. Alphabet and AWS are aggressively pricing competing AI services, and enterprises that have over-indexed on Microsoft without maintaining competitive alternatives are entering renewal negotiations from a position of weakness. This doesn't mean abandoning Microsoft's ecosystem — for many organizations, the integration depth genuinely justifies premium pricing. It means having a credible alternative that your procurement team can reference. The enterprises getting the best Microsoft AI pricing right now are the ones that have done the work to understand what Gemini or Bedrock would cost them as an alternative.

Engaging an AI consulting partner to build a vendor-agnostic AI roadmap is the highest-leverage action most enterprise technology leaders can take in the current environment. The roadmap should capture Microsoft's genuine strengths — particularly in workflow integration, identity, and the Microsoft 365 ecosystem — while explicitly hedging against its execution risks, security vulnerabilities, and the infrastructure lock-in that comes with deep Azure OpenAI dependency. RevolutionAI's consulting practice is built around exactly this kind of vendor-agnostic strategic advisory, combining technical depth across the major hyperscaler platforms with operational experience in the specific failure modes that cause enterprise AI programs to stall. You can explore our full range of capabilities and pricing to find the engagement model that fits your organization's stage and scale.

Conclusion: The Real AI Investment Decision Isn't on the Stock Market

Microsoft's stock price will find its floor. The market will eventually resolve its uncertainty about AI monetization timelines, and Microsoft's genuine structural advantages — its distribution reach, ecosystem integration depth, and HPC infrastructure investments — will likely be reflected in its valuation. For equity investors, the timing of that resolution is the critical question.

For enterprise technology leaders, the timing question is different and more urgent: how do you build an AI program that delivers measurable business value in the next 12 to 18 months, regardless of where Microsoft's stock price lands? The answer requires a clear-eyed assessment of your current AI licensing spend, an honest evaluation of your stalled proofs of concept, serious attention to the security vulnerabilities in your AI deployment, and a vendor-agnostic roadmap that captures the best of what Microsoft, Alphabet, and AWS each offer without inheriting the worst of any single platform's risks.

The organizations that will lead in the AI era are not the ones that made the best stock picks. They're the ones that made the best implementation decisions — and those decisions are available to any enterprise willing to do the strategic work now, while their competitors are still waiting for the market to tell them what to do.

Frequently Asked Questions

Why has Microsoft's stock price dropped despite strong AI investments?

Microsoft's stock price has faced downward pressure because institutional investors are recalibrating their timeline assumptions around AI monetization, not because the underlying technology is failing. The company has committed over $80 billion in AI infrastructure spending for fiscal year 2025, but enterprise activation rates for Copilot remain low, creating a visible gap between capital expenditure and recognized revenue. Markets are pricing that tension, not passing judgment on Azure OpenAI's technical capabilities.

Is Microsoft stock cheap compared to Alphabet and Amazon right now?

Whether Microsoft stock appears cheap depends entirely on the valuation framework you apply and the timeline assumptions you build into it. Relative to forward P/E comparisons with Alphabet or discounted cash flow models projecting Copilot penetration, the stock may look compressed. However, enterprise technology leaders should treat stock price signals as investor sentiment data, not as validation of any vendor's platform maturity or deployment readiness.

How does Microsoft's AI strategy compare to AWS and Google in the enterprise market?

Microsoft leads on workflow integration and identity management through its deep Microsoft 365 ecosystem, making it the natural default for enterprises already running Teams, SharePoint, and Dynamics. Alphabet's Gemini stack offers strong multimodal capabilities and competitive inference pricing, while AWS Bedrock provides a model-agnostic architecture that reduces vendor lock-in risk. No single hyperscaler dominates every enterprise use case, and procurement teams benefit from maintaining a vendor-neutral evaluation framework.

When will Microsoft's AI investments start showing up in revenue growth?

Analysts and institutional investors are actively debating this timeline, which is precisely why Microsoft's stock price has been caught in the same sector-wide compression as Alphabet's and Amazon's. The gap between AI capital expenditure and recognized revenue remains the central uncertainty for equity markets heading into 2025 and 2026. Enterprise adoption curves for products like Copilot will be the leading indicator to watch, as rising activation rates would signal accelerating monetization.

Should enterprise technology decisions be influenced by Microsoft's stock performance?

No — conflating stock price sentiment with platform maturity signals is one of the most common and costly mistakes enterprise technology leaders make. A declining Microsoft stock price does not indicate that Azure OpenAI is technically inferior, just as a rising price does not validate your organization's AI deployment strategy. Procurement decisions should be grounded in operational requirements, contract terms, and architectural fit rather than financial media narratives.

What risks should enterprises consider when expanding Microsoft AI contracts?

Organizations that signed multi-year Microsoft Enterprise Agreements before the Copilot era face complex questions around seat licensing, usage rights, and whether existing contracts cover new AI features. The broader concern about AI disrupting legacy software contracts is reshaping enterprise budgets and creating both hesitation and renegotiation opportunities. Technology leaders should audit their current agreements carefully before assuming AI capabilities are included or before committing to expanded Microsoft licensing.