What Happened: Four Army Drone Systems Stolen from Kentucky Base

In a breach that sent shockwaves through military security circles, four Army drone systems were stolen from Fort Campbell, the Kentucky base home to the 101st Airborne Division and numerous specialized units including the 326th Division Engineer Battalion. The Army Criminal Investigation Command (CID) quickly became involved, launching a formal investigation into what appeared to be a deliberate, coordinated theft of high-value military assets. Suspects were identified relatively quickly, a fact that, while reassuring on the surface, highlights a deeper problem: the Army could identify suspects after the fact, but the systems in place failed to prevent the theft in the first place.

The incident prompted a $5,000 reward offer for information leading to the recovery of the stolen drone systems, a detail that might seem minor but speaks volumes about the state of military asset recovery. When a branch of the armed forces with access to cutting-edge technology resorts to a reward-poster approach to recovering stolen hardware, it signals that reactive, manual processes still dominate the recovery pipeline. Searches for terms like "drones from Fort Campbell," "allegedly stole drones," and "four Army drone systems stolen from Kentucky base" spiked significantly in the wake of the incident, reflecting public demand for answers that go beyond arrest and conviction details. People want to understand how this happened and whether it can happen again.

What this incident reveals is not simply a lapse in physical security at one installation. It exposes a systemic gap between the sophistication of the hardware being protected and the sophistication of the systems protecting it. Fort Campbell is not a low-security facility. Yet the breach window was wide enough for multiple drone systems to leave the installation undetected in real time. That gap is precisely where AI-powered security should be operating — and currently isn't.

Why Military Drone Security Is an AI Problem, Not Just a Physical One

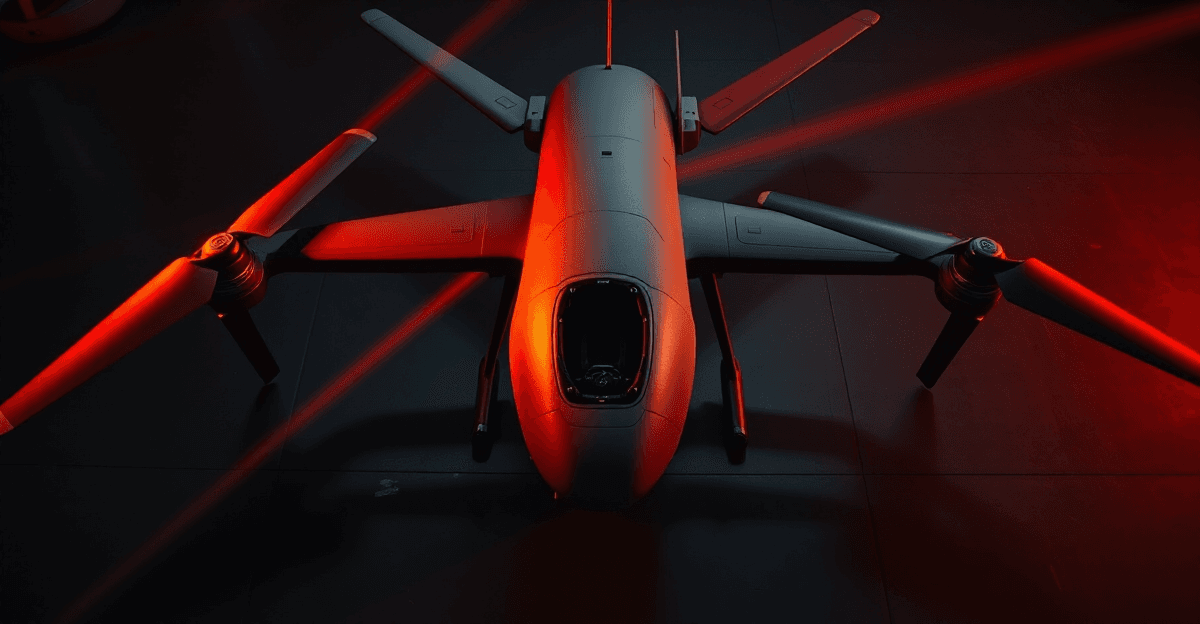

Modern military drone systems are not just flying machines. They are mobile data centers. Embedded firmware, encrypted flight logs, sensor telemetry, navigation algorithms, and in some cases AI-assisted targeting software all live inside the hardware. When those systems are stolen, the loss is not limited to physical assets: it includes machine-readable intelligence that adversaries, criminal organizations, or even curious reverse engineers can potentially extract and exploit. The Fort Campbell drone theft isn't just a property crime. It's a potential intelligence breach.

Physical perimeter breaches at facilities like Fort Campbell signal something specific from an AI security standpoint: a failure in anomaly detection and real-time surveillance integration. Modern AI-assisted CCTV systems can detect unusual movement patterns, flag after-hours access to restricted areas, and cross-reference badge swipe data with physical presence in under a second. The gap between human patrol cycles — which are necessarily periodic — and AI-powered continuous monitoring is precisely the window where incidents like this one occur. A human guard cannot watch every corridor simultaneously. A well-trained computer vision model can.
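The badge cross-referencing idea can be illustrated in a few lines. What follows is a minimal sketch, not a description of any system in use at Fort Campbell: it assumes a camera pipeline that already emits zone-level detections, and the names `BadgeEvent`, `Detection`, and `flag_detections`, the two-minute matching window, and the 06:00 to 20:00 operating hours are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, time, timedelta


@dataclass(frozen=True)
class BadgeEvent:
    person_id: str
    zone: str
    timestamp: datetime


@dataclass(frozen=True)
class Detection:
    """A person detected in a zone by a (hypothetical) vision pipeline."""
    zone: str
    timestamp: datetime


def flag_detections(detections, badge_events,
                    match_window=timedelta(minutes=2),
                    open_from=time(6, 0), open_until=time(20, 0)):
    """Return (detection, reason) pairs for detections that look anomalous."""
    flags = []
    for det in detections:
        # A detection is "badged" if some swipe in the same zone is close in time.
        badged = any(
            ev.zone == det.zone
            and abs(ev.timestamp - det.timestamp) <= match_window
            for ev in badge_events
        )
        if not badged:
            flags.append((det, "no matching badge event"))
        elif not (open_from <= det.timestamp.time() < open_until):
            flags.append((det, "after-hours access"))
    return flags
```

A real deployment would also need to handle tailgating, clock drift between systems, and camera false positives, none of which this sketch attempts.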

Army Criminal Investigation processes, while thorough, remain largely manual in their initial triage phases. Investigators review footage, interview witnesses, cross-reference access logs, and build timelines — all valuable, but all time-intensive. AI triage tools trained on historical incident data can compress suspect identification timelines from days to hours. In the Fort Campbell case, suspects were identified relatively quickly, which suggests the evidence trail was not subtle. That raises an uncomfortable question: if the evidence was that accessible after the fact, why didn't automated systems surface it in real time?

The AI Asset Tracking Gap: How Smart Inventory Systems Could Prevent This

AI-powered asset management platforms represent a mature, deployable solution to exactly the kind of vulnerability exposed at Fort Campbell. These systems combine RFID tagging, computer vision checkpoints, and predictive alerting to flag unauthorized removal of assets in real time — not hours or days later. When a tagged drone system moves beyond a predefined geofenced zone without an authorized checkout event logged in the system, an alert fires immediately. No patrol cycle delay. No human oversight gap. The system sees it the moment it happens.
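The geofence rule described above reduces to a simple check: is the asset outside its zone, and is there no authorized checkout on record? The sketch below assumes a circular geofence and a set of asset IDs with open checkouts. The names `Geofence`, `haversine_m`, and `should_alert` are illustrative, not taken from any specific product.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


@dataclass(frozen=True)
class Geofence:
    center_lat: float
    center_lon: float
    radius_m: float


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two lat/lon points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def should_alert(asset_id, lat, lon, fence, authorized_checkouts):
    """Alert when a tagged asset is outside its fence with no checkout logged."""
    distance = haversine_m(lat, lon, fence.center_lat, fence.center_lon)
    outside = distance > fence.radius_m
    return outside and asset_id not in authorized_checkouts
```

For example, with a 1,000 m fence, `should_alert("drone-1", lat, lon, fence, set())` returns `True` once the reported position is more than 1,000 m from the center, and `False` if `"drone-1"` appears in the checkout set. Production geofences are usually polygons rather than circles, and RFID or GPS positions carry error that would need a tolerance margin.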

The challenge for military and defense-adjacent organizations is that current logistics systems often predate the AI era entirely. Legacy inventory databases, paper-based checkout logs, and siloed CCTV systems create blind spots that bad actors — whether external intruders or insider threats — can exploit with relative ease. The 326th Division Engineer unit connection in the Fort Campbell case underscores this: insider access makes traditional perimeter security almost irrelevant. If the threat is already inside the wire, the perimeter is not your first line of defense. Your asset tracking layer is.

This is where a no-code AI rescue approach becomes strategically valuable. Organizations don't need to tear out and replace their entire logistics infrastructure to gain AI-powered visibility. Retrofit solutions can layer intelligent monitoring on top of existing systems — connecting legacy databases to AI anomaly detection engines, adding computer vision checkpoints at key exit points, and integrating predictive alerting into existing security operations center workflows. RevolutionAI's POC development services are specifically designed for this scenario: rapid prototyping of AI asset tracking solutions that prove value in weeks, not months, without requiring full infrastructure overhauls. Our managed AI services then provide the ongoing monitoring and model retraining that keeps those systems sharp as threat patterns evolve.

Drone Technology and AI Security: A Dual-Use Risk Organizations Must Address

The Fort Campbell drone theft illustrates what security professionals call dual-use risk — and it applies far beyond military contexts. Stolen military drone systems carry two distinct threat vectors: the hardware itself can be repurposed for surveillance, smuggling, or physical attacks, and the embedded AI software — navigation algorithms, sensor fusion models, targeting logic — can potentially be reverse-engineered to understand military capabilities or replicate them in adversarial systems. This isn't theoretical. Defense researchers have documented cases of reverse-engineered drone components appearing in foreign military programs.

For enterprise and commercial drone fleet operators, the scale is smaller but the vulnerability profile is remarkably similar. A stolen commercial delivery drone carries GPS route data, payload manifests, and potentially API credentials for fleet management platforms. A stolen agricultural drone holds field mapping data, crop health analytics, and operational schedules. The data value of these devices often exceeds their hardware replacement cost — and most organizations have no remote kill-switch capability, no behavioral monitoring layer, and no geofencing enforcement that would flag an unauthorized removal.

AI security frameworks must evolve to treat physical device theft as a primary attack vector, not an afterthought. Every drone program should implement three specific layers: encryption at rest for all onboard data, remote kill-switch protocols that can brick a device the moment it's flagged as missing, and AI behavioral monitoring that establishes a baseline of normal device operation and alerts on deviations. Our AI security solutions practice is built around exactly this kind of layered defense model, addressing the full threat surface rather than treating network security and physical security as separate disciplines.
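The behavioral monitoring layer is the least self-explanatory of the three, so here is one simple way it could work. This sketch assumes a single numeric metric per device (for instance, daily flight minutes) and uses a plain z-score against historical samples. The function names and the threshold of 3 standard deviations are assumptions, and real systems would typically model several signals at once.

```python
from statistics import mean, stdev


def build_baseline(samples):
    """Summarize normal operation as (mean, standard deviation).

    Requires at least two historical samples.
    """
    return mean(samples), stdev(samples)


def is_deviation(value, baseline, threshold=3.0):
    """Return True if value is more than `threshold` std devs from the mean."""
    mu, sigma = baseline
    if sigma == 0:
        # No historical variation: any change at all is a deviation.
        return value != mu
    return abs(value - mu) / sigma > threshold


# Hypothetical history of daily flight minutes for one device.
history = [42, 38, 45, 40, 41, 39, 44]
baseline = build_baseline(history)
```

With this history, a day of 43 flight minutes falls inside the baseline, while a day of 120 minutes would be flagged for review.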

Lessons from the 326th Division Engineer Incident for Enterprise AI Deployments

The connection to the 326th Division Engineer unit in the Fort Campbell drone theft is perhaps the most instructive element of the entire incident for enterprise security leaders. High-security environments — military installations, data centers, research facilities — invest heavily in keeping external threats out. They invest far less in monitoring the behavior of authorized insiders. The result is a predictable vulnerability: the most dangerous actor in a secure facility is often someone who already has legitimate access.

AI-driven insider threat detection addresses this gap directly. These systems don't surveil employees in a punitive sense — they establish behavioral baselines and surface statistical anomalies. An authorized user who suddenly begins accessing drone storage areas at 2:00 AM, checking out equipment outside normal operational windows, or accessing inventory records for assets outside their assigned unit triggers a risk score elevation, not an immediate accusation. The system creates an early warning signal that human security personnel can then investigate with context. This is the difference between catching a problem before it becomes a theft and launching a CID investigation after the fact.
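To make the "risk score elevation, not an immediate accusation" idea concrete, the sketch below scores a single access event against the three signals named above. It deliberately swaps the statistical baseline for fixed rules to stay short. The weights, the review threshold, the working hours, and every name in it (`AccessEvent`, `risk_signals`, `needs_review`) are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class AccessEvent:
    user_id: str
    user_unit: str
    asset_unit: str
    timestamp: datetime
    is_checkout: bool


# Illustrative weights; a real system would learn or tune these.
WEIGHTS = {"after_hours": 40, "outside_unit": 35, "off_window_checkout": 25}
REVIEW_THRESHOLD = 60


def risk_signals(event, work_start=6, work_end=20):
    """List the named risk signals this event triggers."""
    after_hours = not (work_start <= event.timestamp.hour < work_end)
    signals = []
    if after_hours:
        signals.append("after_hours")
    if event.user_unit != event.asset_unit:
        signals.append("outside_unit")
    if event.is_checkout and after_hours:
        signals.append("off_window_checkout")
    return signals


def risk_score(event):
    return sum(WEIGHTS[s] for s in risk_signals(event))


def needs_review(event):
    """True when the score is high enough to route to a human analyst."""
    return risk_score(event) >= REVIEW_THRESHOLD
```

Under these assumed weights, a lone 2:00 AM access scores 40 and stays below the review threshold, while a 2:00 AM checkout of another unit's equipment scores 100 and is routed to a human, who decides what happens next.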

Enterprises deploying AI hardware — edge computing devices, autonomous robots, commercial drone fleets — should adopt zero-trust physical security models that mirror the zero-trust architectures now standard in cybersecurity. Zero-trust physical security means no device movement is implicitly trusted, every access event is logged and analyzed, and authorization is continuous rather than one-time. Combined with HPC hardware design principles that build tamper-awareness and geofencing directly into device firmware, organizations can create ecosystems where stolen hardware is immediately identifiable, remotely disabled, and forensically traceable. RevolutionAI's AI consulting services team works with organizations to design these architectures from the ground up — or retrofit them onto existing deployments.
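A rough sketch of what "continuous rather than one-time" authorization might look like in code: every checkpoint crossing is re-evaluated against a short-lived grant, and every decision is logged whether or not it was allowed. The `MovementGate` class, its 15-minute default lifetime, and the log format are assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Authorization:
    asset_id: str
    holder_id: str
    expires_at: datetime


class MovementGate:
    """Evaluates every asset movement; nothing is trusted by default."""

    def __init__(self):
        self._grants = {}
        self.audit_log = []  # (time, asset, holder, checkpoint, allowed)

    def grant(self, asset_id, holder_id, now, ttl=timedelta(minutes=15)):
        """Issue a short-lived authorization for one holder and one asset."""
        self._grants[asset_id] = Authorization(asset_id, holder_id, now + ttl)

    def check(self, asset_id, holder_id, checkpoint, now):
        """Re-check authorization at a checkpoint and log the decision."""
        grant = self._grants.get(asset_id)
        allowed = (
            grant is not None
            and grant.holder_id == holder_id
            and now < grant.expires_at
        )
        self.audit_log.append((now, asset_id, holder_id, checkpoint, allowed))
        return allowed
```

The design choice worth noting is the expiry: a grant issued in the morning does not authorize a movement in the evening, so a stale or borrowed authorization fails at the next checkpoint instead of carrying an asset off the site.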

Building a Proactive AI Security Posture: Actionable Steps for Technology Leaders

The Fort Campbell incident is a case study in reactive security. The response was professional and ultimately effective in identifying suspects — but the fundamental posture was reactive. For technology leaders responsible for AI hardware deployments, the lesson is clear: waiting for a breach to expose your gaps is not a strategy. Here are five concrete steps to shift from reactive to proactive:

1. Conduct an AI asset inventory audit. Catalog every intelligent device in your organization — drones, edge computing nodes, robotics systems, autonomous vehicles — along with its data access level, current physical security protocol, and recovery capability if stolen. Most organizations discover significant gaps in this exercise alone.

2. Implement real-time geofencing and remote deactivation. Every mobile AI hardware asset should have a defined operational boundary and a remote kill capability. If a device leaves its authorized zone without a logged authorization event, it should trigger an immediate alert and, depending on sensitivity classification, initiate automatic deactivation.

3. Deploy AI-powered CCTV and access-log analysis. Modern computer vision systems can monitor facility access points continuously, cross-reference badge events with physical presence, and flag anomalies in real time. This moves your security posture from the reactive investigation model seen at Fort Campbell to predictive prevention.

4. Run tabletop breach simulations that include physical theft scenarios. Most cybersecurity tabletop exercises focus exclusively on network intrusion, ransomware, and data exfiltration. Physical device theft scenarios — including insider threat vectors — should be standard components of security planning. Partner with an AI security solutions consulting firm that understands both the digital and physical threat surface.

5. Leverage no-code rescue frameworks to retrofit existing infrastructure. Full system replacement is expensive and slow. No-code AI monitoring layers can be deployed on top of legacy security infrastructure in weeks, delivering immediate visibility improvements without requiring a multi-year modernization program.
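The first step, the asset inventory audit, is the easiest to start on with nothing more than a spreadsheet export. As a minimal sketch, assuming each asset record carries a sensitivity label and three yes/no protection flags, the following lists assets with gaps, most sensitive first. The field names and gap labels are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    asset_id: str
    kind: str               # e.g. "drone", "edge node", "robot"
    data_sensitivity: str   # "low", "medium", or "high"
    encrypted_at_rest: bool
    geofenced: bool
    remote_kill: bool


def audit_gaps(asset):
    """Return the protections this asset is missing."""
    gaps = []
    if not asset.encrypted_at_rest:
        gaps.append("no encryption at rest")
    if not asset.geofenced:
        gaps.append("no geofence")
    if not asset.remote_kill:
        gaps.append("no remote kill capability")
    return gaps


def audit_report(assets):
    """List (asset, gaps) for assets with gaps, highest sensitivity first."""
    order = {"high": 0, "medium": 1, "low": 2}
    findings = [(a, audit_gaps(a)) for a in assets]
    findings = [(a, g) for a, g in findings if g]
    # Within a sensitivity tier, assets with more gaps come first.
    return sorted(findings,
                  key=lambda f: (order[f[0].data_sensitivity], -len(f[1])))
```

The output of a report like this is a natural input to step 2: the high-sensitivity assets at the top of the list are the first candidates for geofencing and remote deactivation.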

How RevolutionAI Helps Organizations Close the Physical AI Security Gap

RevolutionAI's security practice was built on a foundational premise: that the traditional separation between cybersecurity and physical security is a dangerous fiction in an era of AI-enabled hardware. The Fort Campbell drone theft is exhibit A. The stolen assets are simultaneously physical objects and digital intelligence repositories. Protecting them requires a unified security model — and that's exactly what RevolutionAI delivers.

Our POC development services allow organizations to move from concept to working prototype in weeks. For AI asset tracking specifically, this means deploying a functional anomaly detection system against your actual inventory data, in your actual environment, with measurable results before you commit to full-scale implementation. Defense contractors and enterprise technology leaders consistently tell us that seeing a working proof of concept against their own data is the single most effective way to build internal consensus for security investment.

Our managed AI services extend that value over time. AI security systems are not set-and-forget deployments. Threat patterns evolve, device inventories change, and models require retraining to maintain accuracy. RevolutionAI's managed services team provides continuous monitoring, model performance oversight, and incident response support — ensuring that the AI layer protecting your hardware assets stays calibrated to your actual risk environment. From HPC hardware design that builds security into device architecture to no-code rescue of legacy security systems, our end-to-end AI consulting services are designed to prevent the reactive scrambles that make incidents like the Fort Campbell drone theft so costly and so avoidable.

Conclusion: The Fort Campbell Theft Is a Preview, Not an Anomaly

The theft of four Army drone systems from Fort Campbell should not be read as an isolated security failure at one Kentucky military installation. It should be read as a preview of what happens when the sophistication of AI-enabled hardware outpaces the sophistication of the systems designed to protect it. That gap exists not just in military contexts but across every enterprise deploying intelligent, mobile, data-carrying hardware — from commercial drone fleets to edge AI devices to autonomous industrial systems.

The technology to close this gap exists today. AI-powered asset tracking, behavioral anomaly detection, geofenced kill-switch protocols, and computer vision surveillance are mature, deployable capabilities. The barrier is not technological — it's organizational. It's the tendency to treat physical security and AI security as separate domains, to prioritize reactive investigation over proactive prevention, and to defer modernization until a breach forces the issue.

The Fort Campbell incident is a forcing function. For every technology leader reading this, the question isn't whether your organization could face a similar breach. The question is whether you'll address the gap before or after it happens. RevolutionAI is ready to help you answer that question the right way — before the headline writes itself.

Frequently Asked Questions

What happened in the Fort Campbell drone theft?

Four Army drone systems were stolen from Fort Campbell, the Kentucky military base home to the 101st Airborne Division. The Army Criminal Investigation Command launched a formal investigation, and a $5,000 reward was offered for information leading to the recovery of the stolen systems. Suspects were identified relatively quickly, though the breach itself went undetected in real time, exposing significant gaps in the installation's asset monitoring capabilities.

When were the drones stolen from Fort Campbell?

No specific date is given here for the theft of the four Army drone systems from Fort Campbell. What is clear is the sequence: the Army CID became involved shortly after the theft was discovered, and public reporting was followed by widespread search interest in terms like "drones stolen from Fort Campbell" and "four Army drone systems stolen from Kentucky base." The relatively quick identification of suspects suggests the incident left a clear evidence trail that post-incident investigation was able to follow.

How were the Fort Campbell drones stolen without being detected in real time?

The theft likely exploited the gap between periodic human patrol cycles and the absence of continuous AI-powered surveillance integration. Modern military installations still rely heavily on manual monitoring processes, which create windows of vulnerability that a coordinated theft can exploit. AI-assisted systems capable of detecting anomalous movement and cross-referencing access data in real time could have flagged the breach as it occurred rather than after the fact.

Why is the theft of military drone systems a serious security concern beyond property loss?

Military drone systems contain embedded firmware, encrypted flight logs, sensor telemetry, and potentially AI-assisted targeting software, making them mobile repositories of sensitive intelligence. When these systems are stolen, adversaries or criminal organizations may attempt to extract and reverse-engineer that data, creating an intelligence breach that extends far beyond the physical asset loss. This is why incidents like the Fort Campbell drone theft are treated as potential national security matters, not simply property crimes.

What is the Army doing to recover the stolen drone systems from Fort Campbell?

The Army Criminal Investigation Command launched a formal investigation and offered a $5,000 reward for information leading to the recovery of the stolen drone systems. Suspects were identified in the investigation, suggesting that post-incident forensic processes including footage review, access log analysis, and witness interviews produced actionable leads. However, the reactive nature of this recovery approach highlights the need for real-time AI-powered asset tracking systems that could prevent or immediately flag such thefts.

Could AI technology have prevented the Fort Campbell drone theft?

AI-powered security tools including computer vision surveillance, anomaly detection, and real-time access log cross-referencing could have significantly reduced the breach window that allowed the theft to occur undetected. These systems can monitor restricted areas continuously, flag unusual after-hours activity, and surface alerts in seconds rather than waiting for a human patrol to discover a discrepancy. The fact that suspects were identified quickly after the fact suggests the evidence was available in real time, but no automated system was in place to act on it immediately.